You Get What You Spec

I wrote several hundred thousand lines of code that did what I wanted. The problem: I wrote several hundred thousand lines of code that did exactly what I wanted.

Each service built cleanly. Every test suite passed. The API contracts matched. The TypeScript compiled. By every per-service metric, the implementation was solid. Then I deployed to Kubernetes and the services could not reach each other.

The Integration Wall

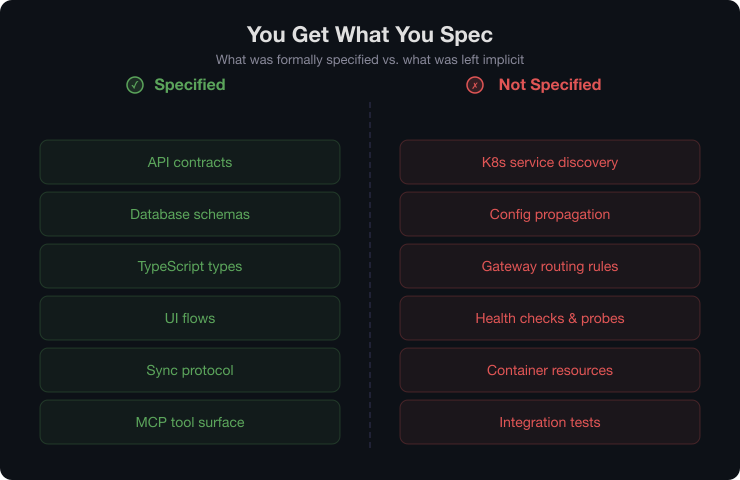

My interface specs were detailed about build-time contracts:

- API endpoints, request/response shapes, auth flows (OpenAPI 3.1)

- Database schemas and migration sequences (DDL)

- Shared TypeScript types across packages

- UI component trees, state management, navigation flows

My interface specs said nothing about runtime contracts:

- How services discover each other in K8s (DNS, service names, ports)

- How config propagates (environment variables, secrets, ConfigMaps)

- How the API gateway actually routes to backend services (ingress rules)

- How health checks, readiness probes, and liveness probes behave

- Container resource limits and scaling policies

Each agent built its service assuming "the network works." Because that assumption wasn't in the spec, the agent wasn't wrong — it just wasn't complete. Every service worked perfectly in isolation. None of them could find each other.
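To make that unstated assumption concrete, here is a minimal TypeScript sketch of a service that declares its upstream dependencies explicitly and refuses to start when one is not configured. The function name `resolveUpstreams` and the variable names `ORDERS_URL` and `AUTH_URL` are hypothetical, not taken from the project. The environment is passed in as a plain object so the sketch does not depend on any particular runtime.

```typescript
// Hypothetical sketch: all names here are illustrative, not from the project.
type Env = Record<string, string | undefined>;

// Resolve every required upstream URL from the environment, or fail loudly.
// All missing variables are collected first, so a misconfigured pod
// reports every gap in one error instead of one per restart.
function resolveUpstreams(env: Env, required: string[]): Record<string, string> {
  const resolved: Record<string, string> = {};
  const missing: string[] = [];

  for (const key of required) {
    const value = env[key];
    if (value === undefined || value.trim() === "") {
      missing.push(key);
    } else {
      resolved[key] = value;
    }
  }

  if (missing.length > 0) {
    throw new Error(`Missing upstream config: ${missing.join(", ")}`);
  }
  return resolved;
}
```

A service written this way still cannot find its neighbors without a spec, but it fails at startup with the names of the missing variables rather than at the first request.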

Why This Happened

I knew I had a gap here: I did not fully understand K8s networking, service discovery, or config management. But I'd been deep in specification work for a week (writing OpenAPI schemas, debating data models, designing sync protocols) and I was tired of speccing. I had a loose idea of the deployment story and was optimistic I could figure it out when I got there.

This was an error, and a specific one: I didn't write the interface spec for "how services interact at runtime" with the same rigor I used for "how the API works." If I had specified conventions like:

- Every service exposes `/healthz` on port 8080

- Service-to-service calls use `http://{service-name}.{namespace}.svc.cluster.local`

- All config comes from a ConfigMap named `{service-name}-config`

- Secrets mount at `/etc/secrets`

- Each Helm chart includes standard labels, resource limits, and probes

...then every agent would have built to those constraints. The 866 commits would have included the deployment contracts. The integration wall would have been lower — maybe not zero, but much lower.
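One way to keep conventions like these from drifting is to encode them once and derive every name from them. The sketch below is an assumption about how that might look in a shared TypeScript package; `ServiceRef`, `RuntimeConventions`, and `runtimeConventions` are hypothetical names, and only the values (the DNS pattern, the ConfigMap suffix, the secrets path, the health endpoint and port) come from the list above.

```typescript
// Hypothetical shared helper: the type and function names are illustrative.
// Only the convention values themselves come from the list above.
interface ServiceRef {
  name: string;      // Kubernetes Service name (example: "orders")
  namespace: string; // Kubernetes namespace (example: "prod")
}

interface RuntimeConventions {
  baseUrl: string;      // cluster-internal URL for service-to-service calls
  configMap: string;    // name of the ConfigMap holding this service's config
  secretsMount: string; // directory where secrets are mounted in the container
  health: { path: string; port: number }; // endpoint used by probes
}

// Derive every runtime name for a service from its name and namespace.
function runtimeConventions(ref: ServiceRef): RuntimeConventions {
  return {
    baseUrl: `http://${ref.name}.${ref.namespace}.svc.cluster.local`,
    configMap: `${ref.name}-config`,
    secretsMount: "/etc/secrets",
    health: { path: "/healthz", port: 8080 },
  };
}
```

For a service named `orders` in namespace `prod` (both example values), `runtimeConventions` yields a `baseUrl` of `http://orders.prod.svc.cluster.local` and a `configMap` of `orders-config`. The same function could feed both application code and chart generation, so the two cannot disagree.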

The Solo Developer Trap

On a team, someone's deployment expertise catches what the API designer missed. A DevOps engineer reads the spec and says "where's the health check contract?" A platform engineer asks "how do services discover each other?"

When it's one person plus agents, the agents share your blind spots because they work from your specs. There's no one to ask the questions you didn't think to ask. The agents will confidently implement exactly what you specified and nothing more.

The Fix

The process is exactly as good as your information architecture. Runtime contracts need the same rigor as build-time contracts.

For future projects:

- Deployment contracts per service. A spec template that forces you to define the port, health endpoint, environment variables, upstream dependencies, and resource requirements — not just the API.

- A platform networking spec. A separate document defining service mesh topology, DNS conventions, ingress rules, and mTLS requirements. This is a cross-cutting concern that doesn't decompose into per-service specs.

- A configuration spec. Which values are per-environment, which are per-service, how secrets are injected. Written once, referenced by every service spec.

- Earlier integration testing. Don't wait until all 14 repos are built to test them together. Deploy the first service and the API gateway at Layer 1 (scaffolding). Catch the gaps when there's one service, not fourteen.
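As a rough illustration of the first item, a per-service deployment contract could be a typed record plus a completeness check that runs before any agent starts building. This is a sketch under assumptions, not the template I actually used: the `DeploymentContract` fields, the `validateContract` function, and the uppercase environment-variable naming rule are all hypothetical.

```typescript
// Hypothetical deployment contract: field names are illustrative, not from the project.
interface DeploymentContract {
  service: string;
  port: number;
  healthPath: string;
  requiredEnv: string[]; // environment variables the service needs at startup
  upstreams: string[];   // names of the services this one calls
  resources: { cpu: string; memory: string }; // Kubernetes quantities, e.g. "250m", "256Mi"
}

// Assumed naming rule for environment variables (uppercase with underscores).
const ENV_NAME = /^[A-Z_][A-Z0-9_]*$/;

// Return a list of human-readable problems; an empty list means the contract is complete.
function validateContract(c: DeploymentContract, knownServices: string[]): string[] {
  const problems: string[] = [];

  if (c.service.trim() === "") problems.push("service name is empty");
  if (Math.floor(c.port) !== c.port || c.port < 1 || c.port > 65535) {
    problems.push(`port ${c.port} is not a valid TCP port`);
  }
  if (c.healthPath.charAt(0) !== "/") problems.push("healthPath must start with '/'");
  for (const key of c.requiredEnv) {
    if (!ENV_NAME.test(key)) problems.push(`invalid env var name: ${key}`);
  }
  if (c.resources.cpu.trim() === "" || c.resources.memory.trim() === "") {
    problems.push("resource requirements are incomplete");
  }
  // Every upstream must itself have a contract, or discovery is unspecified.
  for (const dep of c.upstreams) {
    if (knownServices.indexOf(dep) === -1) problems.push(`unknown upstream: ${dep}`);
  }
  return problems;
}
```

The upstream check is the part that would have mattered most here: it forces the question "how does this service reach that one?" to be answered while there is still only a spec, not fourteen repos.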

The Strongest Evidence

This failure is actually the strongest evidence for the approach, not against it.

The agents did exactly what the specs told them to do. The specs were thorough about build-time concerns and vague about runtime concerns. So the build-time story is solid and the runtime story has gaps. That tells you exactly where the human's attention needs to go.

The fix isn't smarter agents. It's better specs.