The Context Window Made Me a Better Engineer

Getting an AI agent to write a feature is more or less a solved problem. Plan, implement, review. Give the agent a clear spec, let it build, verify the output. That loop is how you get value out of an agent.

The part that surprised me is that this isn't specific to writing code. AI requires you to document your thought process. The agent can only pick up what you put down. With careful forethought, the same plan-implement-review pattern applies to the project management that makes a large venture succeed or fail.

When Talking to Claude Isn't Enough

A good starting point for solving any problem is to just start talking to the agent. Give it context, tell it what you're trying to solve, what constraints you're under, what outcomes you want. It's rubber duck debugging where the duck talks back. Agents are good at rotating the idea space — exploring different angles on a problem that you might not have considered.

But this conversational approach breaks down in three ways:

- Sycophancy. Over a long conversation, the agent stops challenging its earlier conclusions. Ideas that were tentative become assumptions, assumptions become constraints, and the conversation narrows until the agent is just elaborating on a direction nobody questioned. It can't filter signal from noise in its own history. The fix is an adversarial agent with fresh context: feed it a summary of the conversation's results with instructions to pick it apart. Claude is surprisingly good at reviewing Claude's work.

- Bottleneck. You can only maintain so many conversations at once. This is fine for high-touch work like early design, but becomes a wall when you need to implement 38 plans across a single service. You can't have 38 ongoing conversations.

- Scale. You can't fit everything you need to do in a single context window. Acorn has 47 REST endpoints, 23 database tables, a sync protocol, six UI modules, and an MCP tool surface for an AI research agent. No single conversation can hold all of that. You need a mechanism to break the system apart and ensure the pieces make sense on their own and in concert.

Fractal Development

The prime limitation of an LLM is that it can only handle so much at once. But that constraint forces you to approach problems sensibly.

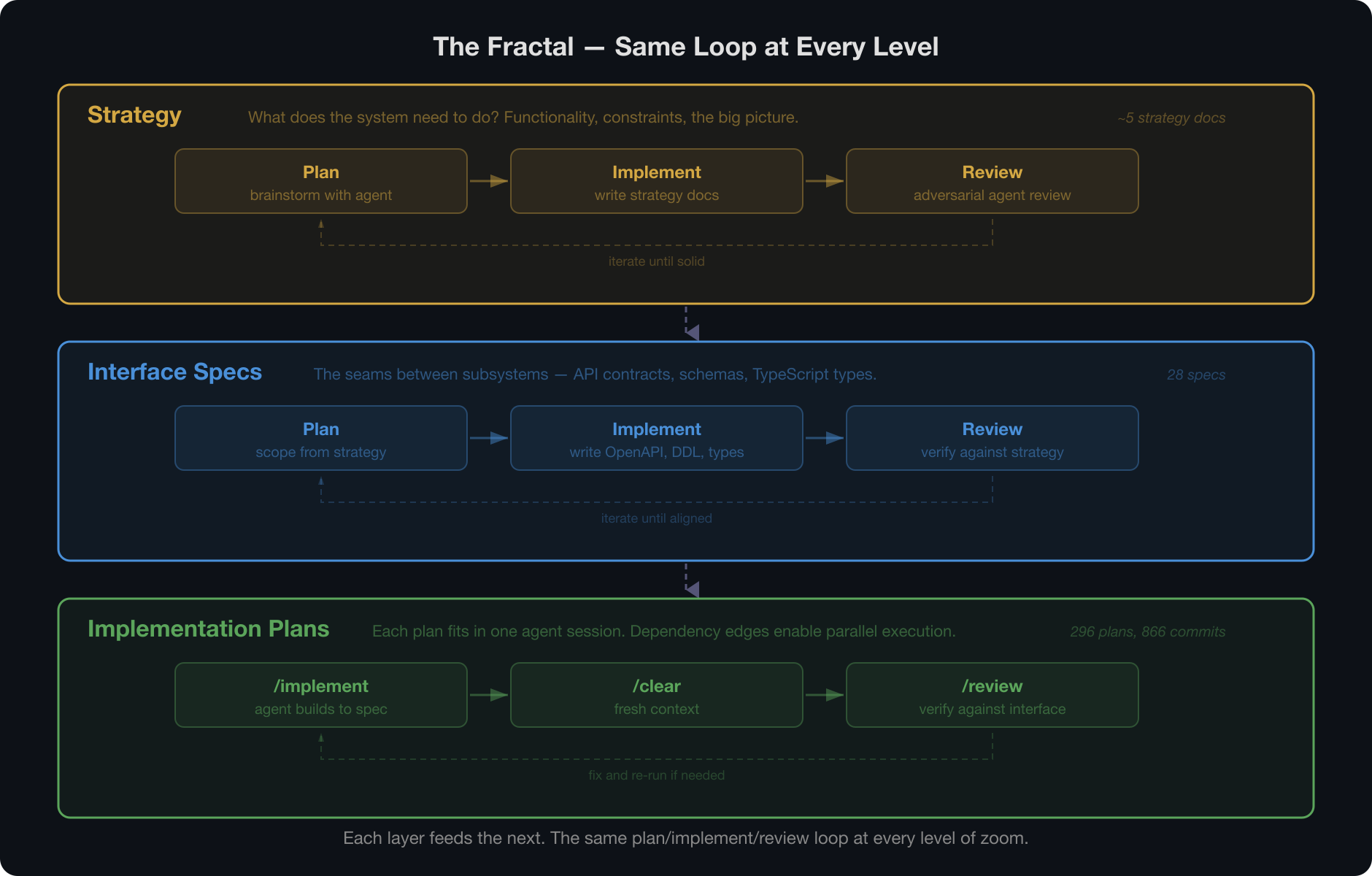

I know — "fractal decomposition" sounds like something a consultant charges $500/hour to say. But the idea is simple. The same plan, implement, review loop works at every level of zoom:

- Strategy documents for what the system needs to do. Functionality, operational constraints, the big picture.

- Interface specs that define the seams between subsystems — backend, frontend, infrastructure — with clearly defined contracts that let you reason about each piece independently.

- Implementation plans within each subsystem, small enough to fit in a single agent session.

Each layer uses the same loop. My strategy docs were largely the output of conversations with an agent, but I could then review them with an adversarial agent — fresh context, clear instructions to find gaps — to identify things I'd missed. Once the strategy was solid, I used it as a base to build out 28 interface specs for the various components of the system. As anyone who's done project planning knows, there's give and take — fleshing out details pokes holes in the original strategy — but the strategy served as a valuable guide.

From there, I broke the interfaces down into 296 plans, each with dependency edges capturing what needed to be built first. Each plan is an implementation spec with inputs and outputs designed to be manageable within half a context window (I find that Claude isn't great at judging the size of its window, so providing wiggle room gives better results). The interfaces served as ground truth for reviewing the plans and ensuring alignment.
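To make the dependency edges concrete, here is a minimal sketch of how plans could be grouped into batches that are safe to run in parallel, using Python's standard `graphlib`. The plan names, the `parallel_waves` helper, and the `fits_budget` check are all hypothetical illustrations, not the tooling actually used on this project, and the token estimate is assumed to come from somewhere else.

```python
from graphlib import TopologicalSorter

# Hypothetical plan names; each maps to the set of plans it depends on.
PLAN_DEPENDENCIES = {
    "schema-migrations": set(),
    "repository-layer": {"schema-migrations"},
    "service-logic": {"repository-layer"},
    "auth-middleware": set(),
    "api-handlers": {"service-logic", "auth-middleware"},
}


def parallel_waves(dependencies):
    """Group plans into waves. Every plan in a wave has all of its
    dependencies satisfied by earlier waves, so a wave can run in parallel."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the edges form a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        waves.append(ready)
        sorter.done(*ready)  # mark the whole wave finished
    return waves


def fits_budget(estimated_tokens, context_window, fraction=0.5):
    """Assumed sizing rule: a plan should fit in a fraction of the window."""
    return estimated_tokens <= context_window * fraction


if __name__ == "__main__":
    for number, wave in enumerate(parallel_waves(PLAN_DEPENDENCIES), start=1):
        print(f"wave {number}: {', '.join(wave)}")
```

With these example edges, the two plans without dependencies land in the first wave and `api-handlers` lands in the last, because it waits on both the service logic and the middleware.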

Finally, I implemented the plans in parallel. The process boiled down to an /implement [plan] command, a /clear to get fresh context, and a /review to verify the implementation against the spec. At this level the loop is mechanical, but if you squint, you can see the same pattern in each of the layers above.
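The mechanical version of the loop can be sketched in a few lines. This is an illustration under stated assumptions: `FakeSession` is a stand-in that only records what it is sent, `run_plan` is a hypothetical driver, and the way the real slash commands are implemented is not shown in this post. The point it demonstrates is that the reviewer starts from a separate, empty context.

```python
class FakeSession:
    """Hypothetical stand-in for an agent session; it only records commands."""

    def __init__(self):
        self.history = []

    def send(self, command):
        self.history.append(command)
        return f"ok: {command}"


def run_plan(plan, new_session=FakeSession):
    """Run one plan through implement, then review, in separate sessions."""
    # Implement: this session sees only the plan it was handed.
    implementer = new_session()
    implementer.send(f"/implement {plan}")

    # Clear: modeled here as starting a brand new session, so nothing
    # from the implementation conversation carries over.
    reviewer = new_session()

    # Review: check the result against the spec from a fresh context.
    reviewer.send(f"/review {plan}")
    return implementer, reviewer


if __name__ == "__main__":
    implementer, reviewer = run_plan("schema-migrations")
    print(implementer.history)
    print(reviewer.history)
```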

Here's a concrete example. One of Acorn's 28 interface specs was the Cloud API — the core backend service that manages people, trees, sources, and evidence. That single spec defined 47 REST endpoints across 6 domain entities, a PostgreSQL schema with 23 tables, and the auth model for multi-tenant access. From that spec, Trellis decomposed the work into 38 implementation plans: schema migrations, repository layers, service logic, API handlers, middleware, and test harnesses — each with explicit input/output contracts and dependency edges. Those 38 plans ran across 64 agent sessions over two days. Each session loaded the interface spec from cache, built its piece, and exited. No session knew about the others. They didn't need to — the spec was the shared memory.
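One way to picture "explicit input/output contracts and dependency edges" is a check that every input a plan expects is produced either by a plan it declares a dependency on or by the interface spec itself. The sketch below is hypothetical: the `Plan` fields, the `unmet_inputs` helper, and the artifact names are invented for illustration and are not Trellis's actual format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    inputs: frozenset = frozenset()      # artifacts this plan expects to exist
    outputs: frozenset = frozenset()     # artifacts this plan produces
    depends_on: frozenset = frozenset()  # names of plans that must run first


def unmet_inputs(plans, spec_provides=frozenset()):
    """Map each plan name to the inputs that neither its declared
    dependencies nor the interface spec provide."""
    by_name = {plan.name: plan for plan in plans}
    problems = {}
    for plan in plans:
        available = set(spec_provides)
        for dependency in plan.depends_on:
            # Raises KeyError if a plan names a dependency that does not exist.
            available |= by_name[dependency].outputs
        missing = plan.inputs - available
        if missing:
            problems[plan.name] = sorted(missing)
    return problems


# Hypothetical plans and artifact names.
PLANS = [
    Plan("schema-migrations", outputs=frozenset({"people_table"})),
    Plan(
        "repository-layer",
        inputs=frozenset({"people_table"}),
        outputs=frozenset({"PersonRepository"}),
        depends_on=frozenset({"schema-migrations"}),
    ),
    Plan(
        "api-handlers",
        inputs=frozenset({"PersonRepository", "AuthContext"}),
        depends_on=frozenset({"repository-layer"}),
    ),
]

if __name__ == "__main__":
    print(unmet_inputs(PLANS))
```

In this example `api-handlers` expects an `AuthContext` that no declared dependency produces, so the check flags it. Passing `spec_provides={"AuthContext"}` models the case where the interface spec itself defines that contract.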

Not a New Idea

If you step back, this is modular design applied to ideas rather than code. The Unix philosophy holds that small programs doing one thing well make software easier to reason about. Microservice architecture applied the same insight to distributed systems. As software developers, we've spent decades learning to encapsulate behavior behind clean interfaces so we can think about pieces independently.

The principle isn't new. The new thing is that it applies to the act of building, not just the thing being built. You decompose the work the same way you decompose the system. LLMs let us treat freeform text with the same level of abstraction we've always applied to code. They automate things that were not previously automatable.

As Billy Vaughn Koen wrote:

"The engineering method is the use of heuristics to cause the best change in a poorly understood situation within the available resources."

AI doesn't change the method. It adds a powerful new heuristic. And the context window, the constraint that seems like a limitation, is what forces you to apply it well.