D'ya Like DAGs?

I've been reading for a while that "the cost of building software has dropped 90%". If you listen to people on LinkedIn, a single person with a Claude subscription can reproduce entire SaaS apps from scratch.

I don't buy it. Even setting aside the complexity of building something secure and maintainable, it's hard to come up with good ideas! AI is an information forklift. It lets you lift orders of magnitude more information than you could manually. But like other power tools, it's dangerous without good process. Anyone who's used an agentic coding tool for more than a weekend knows: small things go fast, complex things make a mess.

Rebuilding something that already exists (a Slack clone, a re-implementation of Next.js, a C compiler) is a solved problem on a long enough timeline. Given a working test suite, an agent can chew through the failures until the tests pass. The more interesting question is whether AI can build something novel: something where the output itself has to be figured out, where you can't just point an agent at a spec that already exists and say "make it pass."

So I decided to test this. Not with a weekend project — with something genuinely complex. Something that would stress the process enough to see where it breaks.

Acorn

My parents are extremely into genealogy. I've watched them wrestle with platforms that lock their research behind walled gardens, don't encourage rigorous methodology, and make collaboration painful. So I set out to build something better — a "GitHub for Genealogy." Open APIs, the Genealogical Proof Standard baked into the workflow, real collaboration with attribution, data ownership, and an AI research assistant that could help search records and identify gaps in your evidence.

That's a fully featured platform: a cloud backend with half a dozen services, a desktop app with offline sync, an admin console, an SDK, Kubernetes infrastructure, Helm charts, Terraform configs, and an AI agent with access to genealogy APIs. Way beyond what one person would reasonably build in their spare time alongside a day job.

But that was the constraint worth testing: could I actually build this with agents?

D'ya Like DAGs?

It turns out you can — if you're careful about how you structure the work.

An AI agent can only handle so much at once (the "context window"). Rather than fighting that constraint, I leaned into it. I broke the project into pieces small enough for an agent to handle in a single session and captured the dependencies between them so they could be built in parallel without stepping on each other.

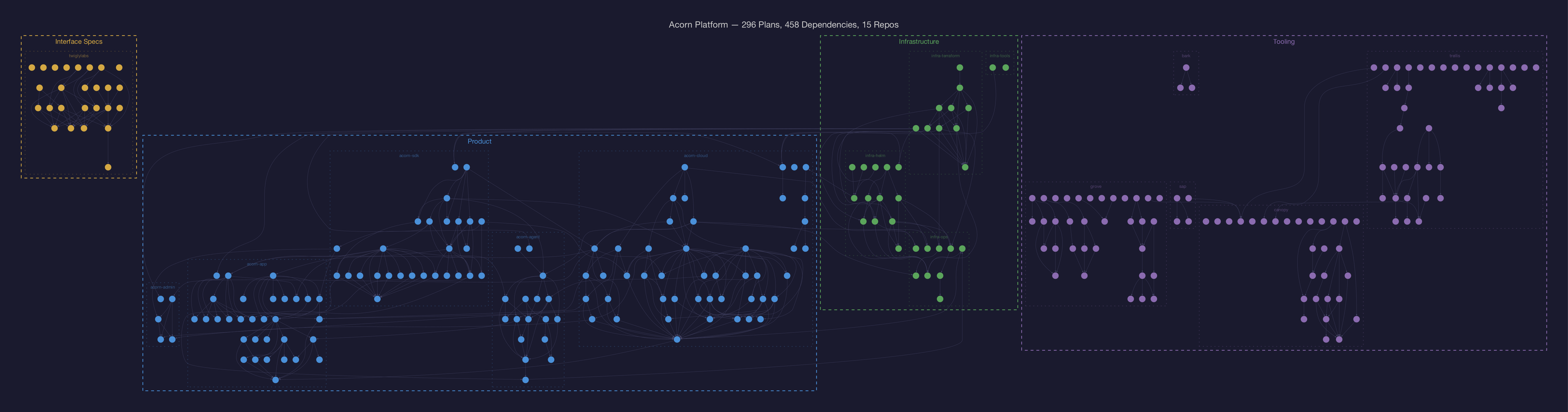

That structure is a DAG — a directed acyclic graph. Each node is a plan. The edges say "build this before that." I built some tooling to manage these plans, track their dependencies, and give me visibility into what was happening. Then I started launching agents against the plans and letting them run.

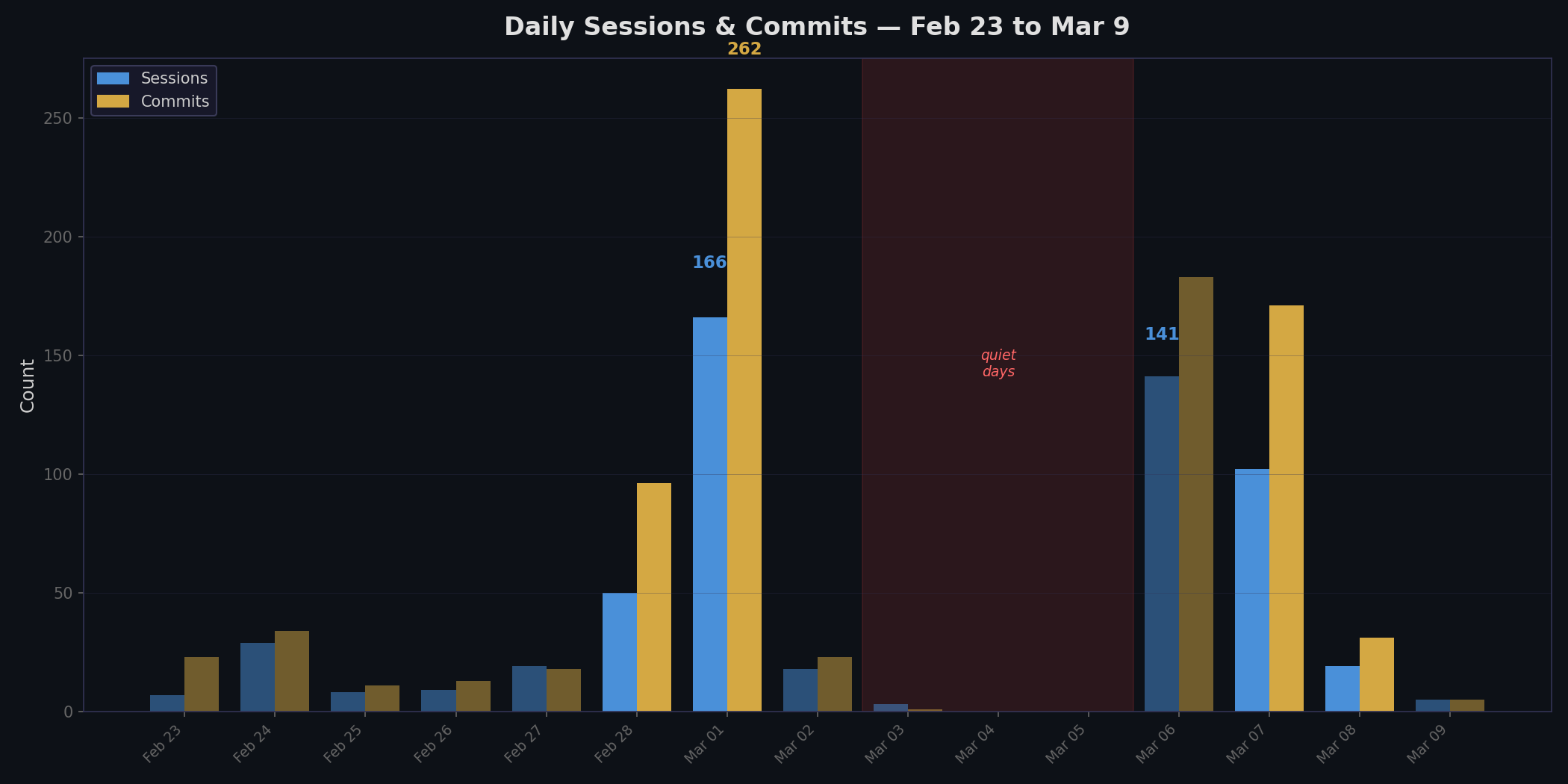
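To make the "build this before that" idea concrete, here is a minimal sketch of turning a plan DAG into waves of work that can safely run in parallel. This is not the tooling described above. The plan names are hypothetical, and it assumes Python 3.9+ for the standard-library `graphlib` module.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical plans for illustration only.
# Each plan maps to the set of plans that must be finished before it can start.
plans = {
    "api-schema": set(),
    "auth-service": {"api-schema"},
    "records-service": {"api-schema"},
    "sdk": {"auth-service", "records-service"},
    "desktop-sync": {"sdk"},
}


def parallel_waves(deps):
    """Group plans into waves; every plan in a wave has all its dependencies done."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises CycleError if the graph is not acyclic
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # plans whose dependencies are complete
        waves.append(ready)
        sorter.done(*ready)  # mark the wave finished, unlocking its dependents
    return waves


waves = parallel_waves(plans)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {', '.join(wave)}")
```

Every plan inside a wave could go to its own agent session at the same time, since nothing in the wave depends on anything else in it. A real scheduler would likely hand out plans as soon as they become ready rather than waiting for a whole wave to finish, and would cap how many sessions run at once.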

Here's what happened in two weeks:

| Metric | Value |

|---|---|

| Calendar days | 15 (Feb 23 – Mar 9) |

| Repos | 14 |

| Plans in the DAG | 296 |

| Agent sessions | 590 |

| Commits | 866 |

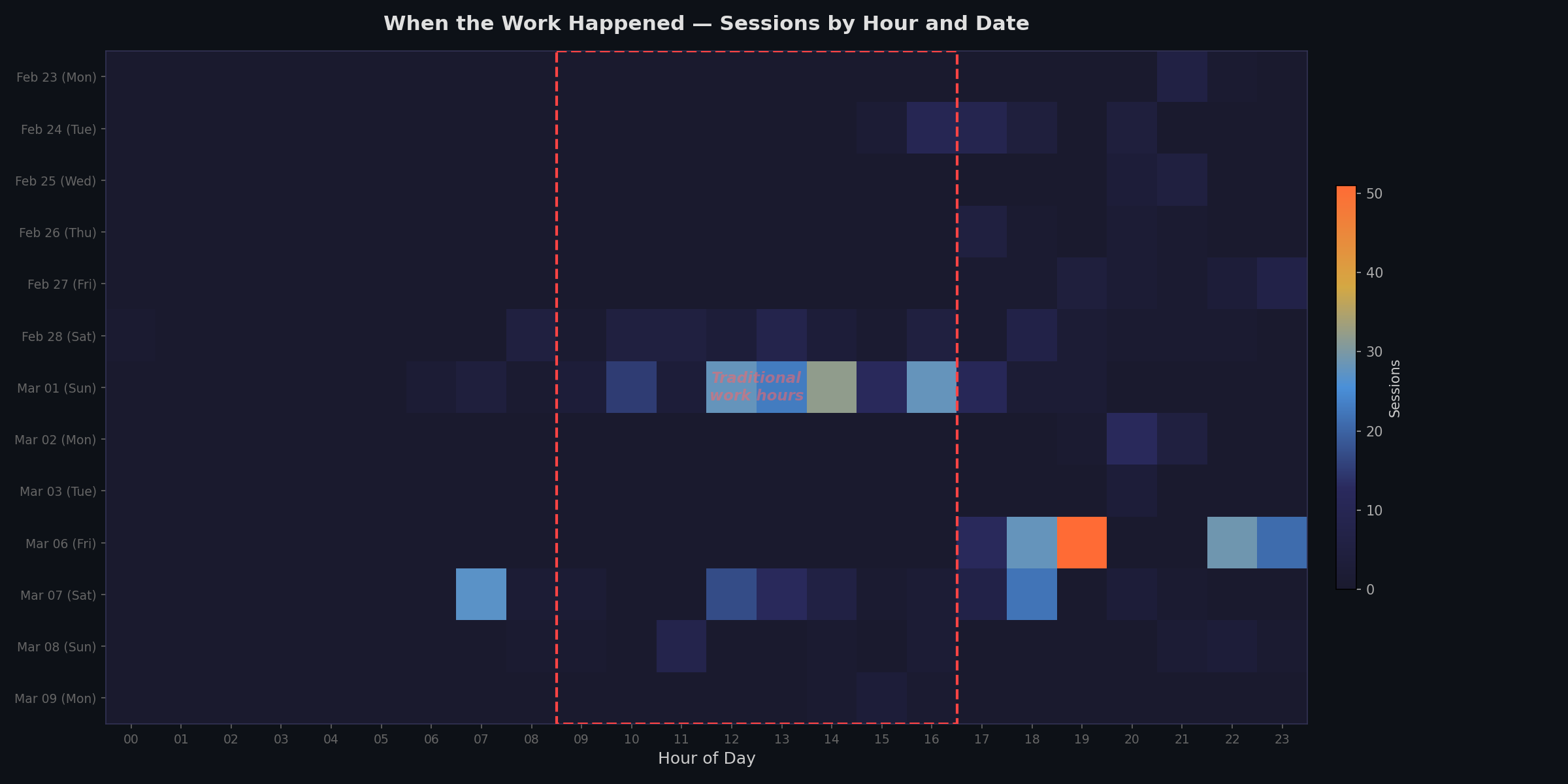

| Sessions outside business hours | 97% |

| Biggest single day | 262 commits (a Sunday) |

| Peak parallelism | 32 concurrent agent sessions |

| Who did this | One person with a day job |

This wasn't a sabbatical or a startup sprint. It was evenings and weekends. Every weekday session started after 5pm. The two biggest implementation days — 262 commits and 183 commits — were a Sunday and a Saturday.

And here's the dependency DAG itself. Each node is roughly one context window's worth of work:

One person can build a complex system if they're disciplined about how they structure the work for the agents.

Now — here's the part where I'm supposed to say "and you can too!" But it's more complicated than that.

The app meets the specification. Every service builds, every test suite passes. But building something with a novel process doesn't mean you rush the release. I'm being deliberate about rolling this out — I want to understand the security and operational story before other people depend on it. Building it was one experiment. Operating it safely is the next one.

And the process wasn't painless. I hit real walls where the approach broke down and I had to rethink. The agents amplified my specifications faithfully. Blind spots and all. That's what I want to dig into.